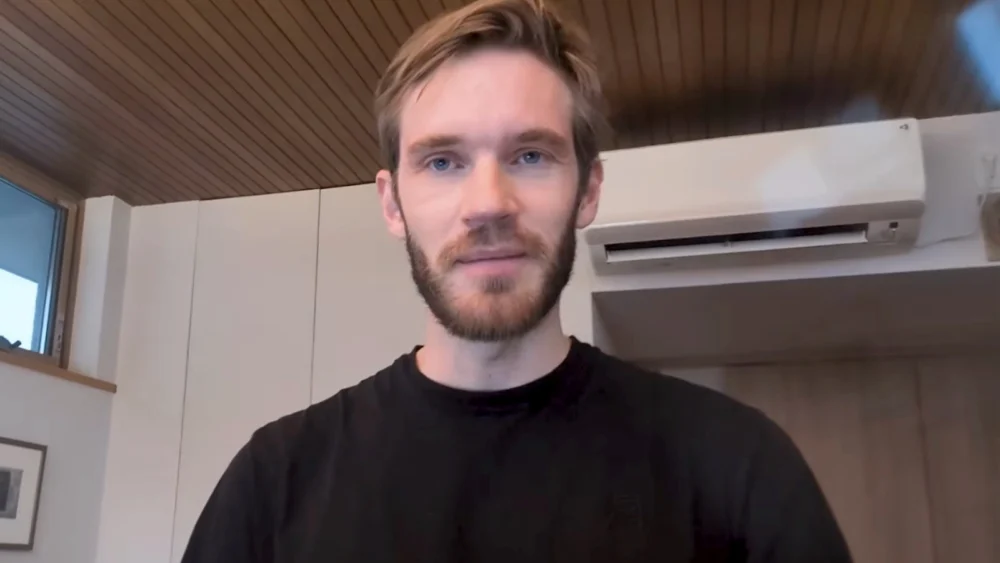

Felix Kjellberg, the globally recognized content creator known as PewDiePie, has unveiled a comprehensive technical project documenting his transition from digital entertainment to machine learning engineering. In a detailed video presentation, Kjellberg revealed that he has spent several months developing, training, and testing a custom artificial intelligence model specifically optimized for software development. The project, which was framed as a personal learning challenge, culminated in a claim that his fine-tuned model briefly surpassed the performance of OpenAI’s ChatGPT on a specific coding benchmark. This development highlights a growing trend of high-level hobbyists and independent researchers leveraging open-source foundations to challenge the dominance of multi-billion-dollar technology corporations.

The initiative began not as an attempt to construct a foundational large language model (LLM) from the ground up—a feat that requires thousands of specialized GPUs and massive capital—but rather as a sophisticated fine-tuning project. Kjellberg clarified that his work involved taking an existing, high-performing open-source model and applying custom datasets and specific training methodologies to enhance its proficiency in generating and debugging code. The creator’s pivot into the technical nuances of machine learning follows his recent public reflections on the value of his time, during which he expressed a desire to move away from traditional gaming content in favor of intellectually demanding pursuits.

The Technical Methodology of Fine-Tuning and Optimization

The process of fine-tuning an AI model involves adjusting the weights of a pre-trained LLM using a smaller, highly curated dataset. For Kjellberg, the objective was to improve performance within the specific constraints of coding agents, which are autonomous or semi-autonomous AI systems designed to write and execute software. He tracked progress on a coding-focused benchmark and compared the results against industry leaders such as Meta’s Llama series, DeepSeek, and OpenAI’s GPT-4.

Kjellberg’s journey was characterized by an iterative "trial and error" approach. Initial testing yielded poor results, with the model scoring a mere 8% on the selected coding benchmark. This baseline performance was significantly lower than that of the top-tier models currently available on the market. However, through multiple rounds of retraining and the introduction of "reasoning data"—data designed to teach the model the step-by-step logic required to solve complex problems—the performance began to climb.
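To make the idea of "reasoning data" concrete, the following is a minimal Python sketch of how a single training record might pair a problem with its step-by-step logic before the final code. The video does not describe Kjellberg's actual data format, so the function name `build_reasoning_sample`, the chat-style `messages` layout, and the "Reasoning:" and "Answer:" labels are all assumptions made for illustration.

```python
import json


def build_reasoning_sample(problem, steps, solution):
    """Pack a problem, its reasoning steps, and the final code into one record.

    The record layout is hypothetical; real fine-tuning pipelines each define
    their own schema.
    """
    # Number the reasoning steps so the model sees an explicit order.
    reasoning = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return {
        "messages": [
            {"role": "user", "content": problem},
            {
                "role": "assistant",
                "content": f"Reasoning:\n{reasoning}\n\nAnswer:\n{solution}",
            },
        ]
    }


if __name__ == "__main__":
    sample = build_reasoning_sample(
        "Reverse a string.",
        ["Strings support slicing.", "A step of -1 walks backwards."],
        "def rev(s):\n    return s[::-1]",
    )
    # One JSON object per line is a common way to store such records.
    print(json.dumps(sample))
```

The point of the layout is that the worked logic appears in the target text itself, so the model is trained to produce the intermediate steps and not only the final answer.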

The breakthrough occurred after the creator implemented format adjustments and more rigorous dataset curation. One specific training run reportedly reached a benchmark score of 19.6%. According to Kjellberg, this score was briefly higher than ChatGPT’s result on the same benchmark at the time. The milestone appeared to show that individual developers could, with enough persistence and computational resources, achieve performance competitive with enterprise-grade models in specialized niches.

Overcoming Benchmark Contamination and Data Integrity Issues

A significant portion of Kjellberg’s report focused on the scientific rigor that AI development demands and the setbacks inherent in it. Shortly after achieving the 19.6% score, he discovered a critical flaw known as "benchmark contamination." In machine learning, contamination occurs when the data used to train the model accidentally includes the questions or solutions from the benchmark used to test it. This leads to artificially inflated scores, as the model is essentially "memorizing" the answers rather than "learning" the underlying logic.

Demonstrating a commitment to technical integrity, Kjellberg invalidated his own results upon discovering the overlap. He explained that the training dataset contained snippets of code that were too similar to the benchmark’s own test material. To rectify this, he moved to a coding-specific version of a base model and overhauled the training data to ensure a "clean" test.
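One common way to screen for this kind of overlap is to look for long token sequences shared between training samples and benchmark items. The sketch below is a simplified illustration, not the method Kjellberg used: the function names, the whitespace tokenization, and the default window of 8 tokens are all assumptions.

```python
def ngrams(text, n=8):
    """Return the set of whitespace-token n-grams found in `text`."""
    tokens = text.split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def find_contaminated(train_samples, benchmark_items, n=8):
    """Return indices of training samples that share any n-gram with the benchmark."""
    # Collect every n-gram that appears anywhere in the benchmark.
    bench_grams = set()
    for item in benchmark_items:
        bench_grams |= ngrams(item, n)
    # Flag a training sample if at least one of its n-grams is in that set.
    return [
        i for i, sample in enumerate(train_samples)
        if ngrams(sample, n) & bench_grams
    ]


if __name__ == "__main__":
    train = [
        "def add(a, b): return a + b  # simple helper used in tests",
        "print('hello world')",
    ]
    benchmark = [
        "Write a function. def add(a, b): return a + b  # simple helper used in tests",
    ]
    print(find_contaminated(train, benchmark, n=5))
```

Flagged samples would then be removed or rewritten before retraining. An exact-match check like this misses paraphrased or lightly edited solutions, so it is a first filter and not a guarantee of a clean dataset.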

Following the retraining process, the model’s performance saw a dramatic and legitimate increase. After fixing the contamination issues and applying post-training adjustments, the model’s score rose to 36%, eventually peaking at 39.1%. This progression illustrates the steep learning curve associated with data preparation and the importance of objective validation in the field of artificial intelligence.

Hardware Constraints and the Physical Reality of AI Training

Beyond the software and algorithmic challenges, Kjellberg’s project highlighted the significant hardware demands of modern AI development. Even fine-tuning an existing model requires substantial computational power, typically provided by high-end Graphics Processing Units (GPUs). Kjellberg described a heavily modified hardware setup that pushed the limits of consumer and semi-professional electronics.

The development process was plagued by technical failures, including system crashes and severe overheating. The creator reported that the high computational load led to the failure of at least one GPU and created ongoing issues with power cables and electrical circuit limits. In a domestic environment, the electrical draw required to run multiple high-end GPUs at full capacity for weeks on end often exceeds standard residential capacity, requiring specialized cooling solutions and power management.
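The electrical constraint comes down to simple arithmetic, sketched below in Python. Every number in the example is hypothetical: the video does not state the GPU count, per-card wattage, or circuit rating, and the 0.8 factor reflects a commonly cited rule of thumb for continuous loads, not a figure from the source.

```python
def circuit_headroom_watts(gpu_count, watts_per_gpu, other_load_watts,
                           volts, breaker_amps, continuous_factor=0.8):
    """Return the watts left on one circuit; a negative value means overload.

    `continuous_factor` derates the breaker for loads that run for hours,
    which is an assumed rule of thumb here.
    """
    usable = volts * breaker_amps * continuous_factor
    demand = gpu_count * watts_per_gpu + other_load_watts
    return usable - demand


if __name__ == "__main__":
    # Hypothetical rig: 8 GPUs at 450 W each plus 500 W for CPU, fans, and
    # drives, all on a single 230 V, 16 A circuit.
    headroom = circuit_headroom_watts(8, 450, 500, volts=230, breaker_amps=16)
    print(f"Headroom: {headroom:.0f} W")
```

Under these assumed figures the demand exceeds what one circuit can continuously supply, which is why a multi-GPU rig in a home typically has to be split across circuits or run with power limits.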

Kjellberg noted that he had to repeatedly rebuild portions of his system to maintain the training schedule. These hardware struggles provide a realistic look at the "garage-built" nature of independent AI research, contrasting with the climate-controlled, industrial-scale data centers used by firms like Google or Anthropic.

Comparative Performance and the Rapid Evolution of AI

While the 39.1% score represents a significant achievement for an independent project, Kjellberg remained pragmatic about the model’s standing in the broader ecosystem. He acknowledged that while his model outperformed certain versions of ChatGPT on a specific benchmark, the AI field moves at an unprecedented pace. During the months he spent developing his model, newer models such as Qwen 3 emerged and have already posted higher scores on the same benchmarks.

The comparison between Kjellberg’s model and OpenAI’s ChatGPT is particularly notable because it underscores the difference between general-purpose models and specialized ones. ChatGPT is designed to be a "jack-of-all-trades," capable of writing poetry, summarizing text, and coding. By focusing exclusively on coding and using specialized reasoning data, Kjellberg was able to squeeze higher performance out of a smaller architecture for a specific task—a strategy that is becoming increasingly popular in the corporate world for reducing the cost and latency of AI deployments.

Broader Implications for the Tech Industry and Creator Economy

The success of this project carries several implications for the future of both the tech industry and the creator economy. First, it demonstrates the democratization of high-level technology. Only a few years ago, the idea of a single individual training an AI model to compete with Silicon Valley giants would have been dismissed as impossible. Today, the availability of open-source weights (such as Meta’s Llama) and sophisticated fine-tuning libraries (such as those published by Hugging Face) has lowered the barrier to entry for talented individuals.

Second, the project signifies a shift in the "influencer" landscape. As the first generation of major YouTubers matures, many are seeking to leverage their platforms and resources to contribute to more technical or philanthropic fields. Kjellberg’s transition from a gaming personality to a self-taught AI researcher may serve as a blueprint for other creators looking to apply their wealth and influence to complex global challenges.

Finally, the project highlights the critical role of data quality over data quantity. Kjellberg’s ability to jump from 8% to 39.1% was not the result of simply throwing more data at the model, but rather refining the quality and format of that data. This aligns with current industry trends where "small language models" (SLMs) trained on high-quality, synthetic reasoning data are beginning to rival the capabilities of much larger, "brute-forced" models.

Future Prospects and the Path to Public Release

As the video concluded, Kjellberg remained undecided on whether he would release the model to the public. He emphasized that the project was primarily a vehicle for learning through experimentation and failure. Before any potential release, he expressed a desire to conduct further testing against a broader array of coding benchmarks to ensure the model’s performance is generalized and not limited to a single test environment.

The creator’s journey into machine learning is far from over. Whether he continues to iterate on this specific coding model or moves on to other facets of AI, such as computer vision or robotics, the project has already served its purpose as a high-stakes educational endeavor. For the broader tech community, it serves as a reminder that the next major breakthrough in AI might not come from a corporate boardroom, but from a dedicated individual with the resources and the will to experiment.

Kjellberg’s foray into AI training reflects a broader societal shift toward technical literacy in the age of automation. By documenting his failures—ranging from benchmark contamination to melted hardware—he has provided his audience with a transparent look at the difficulties of modern engineering, demystifying a field that is often shrouded in corporate secrecy. As AI continues to integrate into every facet of the global economy, the contributions of independent developers and high-profile hobbyists will likely play a crucial role in shaping the transparency and accessibility of the technology.